Do You Think You're Clever?

(Physics and Philosophy, Oxford)

The answer is about 43. You can work this out very roughly, knowing that the distance to the moon is a little less than 400,000 km and that a thin sheet of paper is about 0.1 mm, or 0.0000001 km, thick. You could double 0.0000001 until you reach approximately 400,000 or halve 400,000 until you reach roughly 0.0000001. The number of folds involved is actually surprisingly small because the thickness of the paper increases exponentially, doubling with each fold. I would take a little while to work this out mentally, without the aid of a calculator, but I just happen to know that it takes 51 folds to reach the sun from the earth,* and knowing that the moon is 400 times nearer than the sun, I can work out fairly instantly that it takes about eight fewer folds to reach the moon. If you didn’t know, you’d simply have to work the answer out slightly more laboriously.
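The doubling argument above can be checked with a few lines of Python. This is only a sketch: the 0.1 mm sheet comes from the text, while the two distances are assumed mean values (roughly 384,400 km to the moon and 149.6 million km to the sun), and the function names are made up for illustration.

```python
import math

PAPER_THICKNESS_M = 0.1e-3     # 0.1 mm sheet, as in the text
MOON_DISTANCE_M = 384_400e3    # assumption: mean earth-moon distance
SUN_DISTANCE_M = 149.6e9       # assumption: mean earth-sun distance


def folds_to_reach(distance_m, thickness_m=PAPER_THICKNESS_M):
    """Count doublings until the folded stack is at least distance_m thick."""
    folds = 0
    stack = thickness_m
    while stack < distance_m:
        stack *= 2      # each fold doubles the thickness
        folds += 1
    return folds


def folds_closed_form(distance_m, thickness_m=PAPER_THICKNESS_M):
    """Same count via logarithms: smallest n with thickness * 2**n >= distance."""
    return math.ceil(math.log2(distance_m / thickness_m))


moon_folds = folds_to_reach(MOON_DISTANCE_M)
sun_folds = folds_to_reach(SUN_DISTANCE_M)
print("folds to the moon:", moon_folds)
print("folds to the sun:", sun_folds)
print("difference:", sun_folds - moon_folds)
```

With these assumed figures the exact count for the moon lands within a fold of the rough mental estimate, which is all a back-of-the-envelope answer needs to do.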

Folding paper has actually been the subject of serious mathematical analysis for over half a century. Some of the interest, naturally enough, has come from those masters of paper-folding, the Japanese, and the basic mathematical principles or axioms for folding, covering multi-directional origami folds as well as simple doubling, were established by Japanese mathematician Koshiro Hatori in 2001, based on the work of Italian-Japanese mathematician Humiaki Huzita.

Because of the exponential increase in thickness with each fold, it was widely believed that the maximum number of doubling folds possible in practice was seven or eight. Then in January 2002, American high school student Britney Gallivan proved this wrong in a project she did to earn an extra maths credit. First she managed to fold thin gold foil twelve times, and then, when some people objected that this wasn’t paper, succeeded in making the same number of folds in paper. Britney went on to devise

*

51 folds of 0.1 mm-thick paper would produce a wad 2.26 × 1011 metres thick, which is about the distance to the sun.

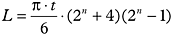

a formula for calculating the length of paper you need to achieve a certain number of folds (

t

is the thickness of the paper,

n

is the number of folds and

L

is the length):

| |

Using this formula, Britney showed that you could get further by folding lengthways, but that twelve was pretty much the practical limit for folding paper. So it would be impossible to get more than a metre or so off the ground in practice, let alone all the way to the moon.
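To get a feel for why twelve folds is so demanding, here is a rough sketch that evaluates the commonly quoted form of Gallivan's single-direction formula, L = (πt/6)(2ⁿ + 4)(2ⁿ − 1). Treat that form, the 0.1 mm thickness and the function names as assumptions made for illustration rather than as figures taken from her project.

```python
import math


def min_length_single_direction(thickness_m, folds):
    """Minimum strip length (metres) for `folds` folds in one direction.

    Assumed formula: L = (pi * t / 6) * (2**n + 4) * (2**n - 1).
    """
    return (math.pi * thickness_m / 6) * (2**folds + 4) * (2**folds - 1)


def stack_height(thickness_m, folds):
    """Height of the folded wad: the thickness doubles with every fold."""
    return thickness_m * 2**folds


THICKNESS_M = 0.1e-3  # assumption: 0.1 mm paper

for n in (7, 8, 12):
    length = min_length_single_direction(THICKNESS_M, n)
    height = stack_height(THICKNESS_M, n)
    print(f"{n} folds: strip of about {length:.1f} m, wad about {height:.3f} m high")
```

Under these assumptions the required length grows roughly sixteenfold for every two extra folds, so a strip hundreds of metres long yields a wad well under a metre high.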

(History, Cambridge)

If the question was ‘Will history stop the next war?’, the answer must be almost certainly not. There are wars being fought all over the world right now, and almost all of them have their roots in historical issues. Some of the historical issues are in the recent past; some are fuelled by ancient, yet still burning resentments; some are a mix of both. The conflict between Israel and Arab Palestine, for instance, finds its origins both in ancient tribal and religious differences and in the more recent nature of the division of Palestine in the wake of the Second World War. The war in the Congo stems partly from the legacy of European colonialism. And it’s highly likely that historical issues will play a key role in whatever war starts next, whether it’s another skirmish between Georgia and neighbouring Russia, or between North Korea and the South.

However, the question asks ‘can’ history stop the next war*; in other words, might lessons learned from history reduce the chances of a war starting? It seems logical that they might. Surely people learn from their mistakes? The pessimist would say that there is no evidence that they do. If people did learn from history that war is a ‘bad thing’, then we would surely have seen the frequency and severity of wars decline throughout history as their appalling costs became clear. Yet the last hundred years have seen the most devastating wars of all time – and never a moment without conflict somewhere in the world. In some ways, you could say that the lesson people actually seem to have taken from history, despite what our moral side would like, is that war is not such a bad thing, or at least that it’s not so bad that it must be avoided in future. The costs seem never to have been so high that they have ever made embarking on another war inconceivable.

* One of the interesting things about the Oxbridge questions is that they can be ambiguous, and often the key to providing a ‘clever’, original answer is to spot these ambiguities. Here, for instance, ‘can’ is an ambiguous word choice. The questioner could mean: has history the ability to stop the next war? And of course by itself it cannot; history is simply the story of what happened in the past. However, it’s a good bet the questioner is asking if lessons that people learn from history could stop the next war. It seems possible, but again the phrasing of the question implies that it almost certainly won’t. The next war is, by definition, a war that must start sometime, however near or far in the future, and it seems unlikely that lessons learned from history would ever stop a war once started.

Yet there is a more optimistic way of looking at things. After the horror of the First World War, the victorious nations got together to form the League of Nations with the aim of preventing the outbreak of war in future. Yet they made the mistake of punishing Germany, the nation they held responsible for the war, too severely. The economic hardship and loss of national pride drove the Germans into the embrace of Hitler and took the world into an even more widespread and devastating war. After the Second World War, it seems enough people had learned the lessons of the previous disaster to avoid pressing the defeated Germany too hard. Indeed, the famous Marshall Plan helped to rebuild the German economy and trigger its remarkable post-war drive to prosperity and stability – a prosperity and stability which played a major part in undermining the attractions of communism in the east of Europe, and so helped to bring about the end of the Cold War.

People criticise the ineffectiveness of the United Nations, or its domination by the big nations of the Security Council, and yet the establishment of an international forum where nations can air their grievances before going to war is a lesson learned from history. Of course there have been many wars, large and small, since the Second World War – including the Korean, Vietnamese, Iran–Iraq and Gulf wars – and the UN itself has overseen the initiation of some wars, such as the Kosovan conflict, the Afghan war and the invasion of Iraq.

However, it is entirely plausible to argue that the devastation of the two world wars has at least made the major powers stop to think before reacting to issues with a declaration of war, and may have kept conflicts regional rather than global. The rivalry between the Soviet Union and the USA during the Cold War, for instance, never escalated beyond the regional in a way such a rivalry might have done earlier. And it may be that the experience of the horror of the atomic bomb attacks on Japan in 1945 has been behind the determination of the major powers to avoid nuclear war or even major warfare – though of course the moral drawn by some of the American and Russian military from Hiroshima and Nagasaki was also that nuclear weapons are so powerful that they cannot afford to be without their own ‘superior’ versions. And here we come to the heart of this question.

History is nothing more than the story of the past, and there are as many interpretations of it as there are people telling the story. It is certainly worth studying history to learn, in simplistic terms, from our mistakes, but there is not one single history teaching one clear lesson. The lesson many Germans learned from their defeat in the First World War was not to avoid war in future but to make sure they won the next time. Each of us draws our own lessons from history, and applies them in our own way.

And this leads to another problem raised by this question. Who is learning the lessons? Is it individual people? Is it politicians? Is it generals? Is it nations? And how do they put what they have learned into practice in a world that might fundamentally disagree with them, or simply have an entirely different agenda? Ultimately, then, it’s impossible to say if history, or rather lessons learned from history, can stop the next potential war; the responsibility belongs to myriad people and events in the here and now. This is not to say that studying history can teach us nothing. No, history may just provide the vital insights to the right people at the right moment that make it possible to avoid going down the same terrible path to war again. As Machiavelli said, ‘Whoever wishes to foresee the future must consult the past; for human events ever resemble those of preceding times. This arises from the fact that they are produced by men who ever have been, and ever shall be, animated by the same passions, and thus they necessarily have the same results.’

(Law, Cambridge)

Lawyers have so long been lampooned for their slipperiness and skill at exploiting legal niceties regardless of the truth that it’s tempting to say ‘nowhere’. Crooked or devious lawyers have been the stuff of stories for centuries.

In the words of the eighteenth-century English poet and dramatist John Gay:

I know you lawyers can with ease

Twist words and meanings as you please;

That language, by your skill made pliant,

Will bend to favour every client.

And of course there is an element of truth in this. Lawyers are often employed by clients to find a way to use the law to protect their interests, not to find the honest course. Viewed in this cynical way, a lawyer’s task is to negotiate a path through the thickets of legal restrictions, not to uphold the truth, or even ensure justice. A lawyer might, for instance, be employed to locate a loophole in the law that allows a client to get away with what, to an honest man, looks a lot like robbery.

Indeed, one way of looking at the law would be as a programme for society, providing automatic checks, controls and guidelines to keep society running smoothly and ensure good behaviour – or rather, behaviour that causes no conflict. Like a computer program, the law viewed this way is blind, and honesty becomes irrelevant. All that matters is compliance with the law, and lawyers are simply skilled operators of the program.

But this sci-fi Orwellian view of law, in which individuals are reduced to bit parts, is actually very different from the messy reality in which honesty does and must have a role. It’s no accident that the very first thing a witness is asked to do when he or she steps into the witness box at a trial is to swear an oath to tell ‘the truth, the whole truth and nothing but the truth’. This need for honesty is right at the heart of the law.

Of course, nearly all of us are dishonest in some way from time to time. For most, it’s no more than telling an occasional white lie. Only for a few is it a major crime. And this is the crux. A system of law is workable only because most people are essentially honest most of the time. If most were essentially dishonest, society could probably be kept stable only by military means, and the rule of law would be unworkable. However, if people were entirely honest all the time, laws would be largely unnecessary. All we would probably need would be codes with guidelines to help people settle disputes, rather than enforceable laws. The force of law is needed to cope with the, fortunately rare, times when people are dishonest. In theory, it protects the majority of honest people from the minority of dishonest people. Enforceable laws are, of course, a restriction on our freedom but, as the philosopher John Locke made clear, we enter a social contract, agreeing to these restrictions on our freedom in return for the protection from others’ dishonesty that laws provide.

The legal system would rapidly grind to a halt if we couldn’t trust that people are essentially honest most of the time – and the provision ‘most of the time’ is essential. We don’t simply rely on witnesses to tell the truth in court. We rely on officers of the law to be honest, for instance – to tell the truth and not be swayed by undue influence and bribery. If they weren’t, the country would no longer be governed by laws but by power networks. And most legal documents carry the rider ‘in good faith’ – because it’s simply impractical to cover every eventuality. Similarly, if crime were too rife, the courts would become clogged and the legal system would break down.

The classic assumption underpinning the criminal justice system, ‘innocent until proven guilty’, assumes of course that most people are indeed honest. The burden is therefore on the legal system to prove that someone is dishonest, or worse. Imagine how uncomfortable it would be, and how difficult life could become, if officers of the law assumed that all of us were dishonest. That was the problem with the ‘sus’ laws in the UK, which became so unpopular in the early 1980s because of the way they seemed to target racial groups that they were eventually abolished. Recent anti-terrorist legislation provokes the same problems.

But there is a problem with trust that is emerging in the legal systems of the UK and USA in particular. Although a belief in the fundamental honesty of most people is vital to the legal system, a strand of social, political and economic thinking emerged in recent decades that was in some ways opposed to this notion. Ideas such as game theory came to underpin the notion that people are, if not fundamentally dishonest, at least driven ultimately by self-interest to the point where honesty is irrelevant. The classic ‘prisoners’ dilemma’ in game theory* predicts that people must learn to become dishonest, and assume that other people are dishonest, if they are to survive and thrive. Such thinking has bubbled up in many places, from Dawkins’ notion of the ‘selfish gene’, to Mrs Thatcher’s infamous comment that ‘there is no such thing as society’, to Reaganomics, Tony Blair’s ‘targets’ for public sector employees – and most notoriously in the deregulation of the finance system.

But there is a problem for the law with this assumption that people are dishonest. Not only does it create distrust at best, and at worst paranoia, it means that the law begins to lack direction (except when it serves to persecute rather than prosecute). If lawmakers and enforcers start from the assumption that people are dishonest, then there is no guide through the thicket of what makes a good law and what a bad. It becomes hard to judge what is simply protecting against the likely dishonesty of people and what is actually persecution or, in effect, martial law.

* In the famous prisoners’ dilemma of game theory, two suspects are arrested and imprisoned separately. With insufficient evidence, the police offer a deal. If one testifies against the other while the other stays silent, he will be set free and the betrayed party will get a ten-year sentence. If they both remain silent, they each receive a six-month sentence. And if they each testify against each other, they each get five years. So what should you do if you were one of the prisoners? It would seem that the best ‘strategy’ is to assume that the other prisoner will betray you, and so to testify yourself. If he does betray you, the worst you get is five years rather than ten, and if he doesn’t, you go free. Many social theorists have gone on to assume that society must run on the same assumptions – that in reality people will make decisions in their own interest with no real reference to honesty. And so, this argument goes, the law must be based on the premise that people are fundamentally dishonest. The interesting thing, though, is that people conform to expectations.
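The reasoning in the prisoners’ dilemma can be laid out as a small payoff table. The sketch below uses the sentences quoted in the footnote (six months, five years, ten years, or freedom); the names `SENTENCES`, `best_response` and `dominant_strategy` are invented for illustration and do not come from any game-theory library.

```python
SILENT, TESTIFY = "silent", "testify"

# Sentences in years, keyed by (my choice, other's choice) -> (mine, theirs).
SENTENCES = {
    (SILENT, SILENT): (0.5, 0.5),   # both stay silent: six months each
    (SILENT, TESTIFY): (10, 0),     # I am betrayed: ten years for me
    (TESTIFY, SILENT): (0, 10),     # I betray: I go free
    (TESTIFY, TESTIFY): (5, 5),     # both testify: five years each
}


def best_response(other_choice):
    """My choice that gives me the shortest sentence, given the other's choice."""
    return min((SILENT, TESTIFY), key=lambda mine: SENTENCES[(mine, other_choice)][0])


def dominant_strategy():
    """Return the choice that is best whatever the other does, or None."""
    responses = {best_response(other) for other in (SILENT, TESTIFY)}
    return responses.pop() if len(responses) == 1 else None


print("best reply if the other stays silent:", best_response(SILENT))
print("best reply if the other testifies:", best_response(TESTIFY))
print("dominant strategy:", dominant_strategy())
print("years served in total if both testify:", sum(SENTENCES[(TESTIFY, TESTIFY)]))
print("years served in total if both stay silent:", sum(SENTENCES[(SILENT, SILENT)]))
```

Testifying is the better reply in both cases, yet the pair serve far more time between them than if both had stayed silent, which is the tension the argument about self-interest and honesty turns on.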

I wonder – though of course, this is sheer kite-flying – if this is one reason why the recent New Labour government in the UK, led by a party which has always been seen as the party of social justice, has sometimes seemed lacking in focus in legislation. I wonder, too, if an assumption that people are dishonest, and a legal system framed as if they are, actually helps to turn them that way …